Lei Feng Network: This article comes from DeeperBlue.

Lei Feng Network note: screenshot from the Deep Black official website.

At 00:01 on August 16, 2016, the "Deep Black" app, developed by Hunan Oufei Network Technology Co., Ltd., launched on the Android platform. Deep Black describes itself as follows:

"An amazing mobile app for art lovers that instantly transforms your photos into works of art. Unlike traditional filters, Deep Black is based on artificial intelligence. Each style is trained and created by real artists. With one tap, you can get a range of stunning artistic styles."

The phrases "based on artificial intelligence" and "unlike traditional filters", together with the style of the sample works, are reminiscent of Prisma, the hit app of 2016 that was second in popularity only to Pokémon Go.

Sample works from Deep Black. Image source: oandf.cn

Prisma reflects an ambition of the artificial intelligence era: to have computers stand in for artists who paint by hand. Impressionism, Fauvism, ukiyo-e, pop art, deconstruction: artistic styles were once elusive concepts that lived only in the artist's mind. In the era of artificial intelligence, artistic styles have proved to be quantifiable, and through machine learning new works can be produced continuously.

What separates quantification (mathematics) from style (art) is time. Leonardo da Vinci, the most diligent of oil painters, took a week to paint an ordinary work. In the era of artificial intelligence, that takes less than 20 seconds.

With the release of a Chinese counterpart to Prisma, today we take a look at image applications of deep learning.

| Teaching the computer to be Van Gogh

Europe seems to have a broader popular base for art than other regions. Well before Prisma took off in 2016, three German researchers already wanted to turn computers into Van Gogh.

The three researchers, Leon Gatys, Alexander Ecker and Matthias Bethge, are from the Bethge Lab at the University of Tübingen, Germany. They developed an algorithm that simulates how human vision processes images. It works by training a multi-layer convolutional neural network (CNN) so that the computer can recognize and learn Van Gogh's "style" and render any ordinary photo in the manner of Van Gogh's "The Starry Night."

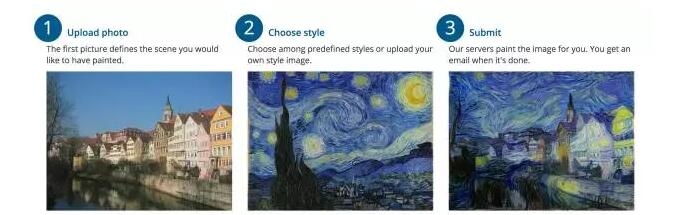

The Deep Art home page shows the steps for getting a "Van Gogh style" picture. In the first step, the user uploads a photo. In the second step, the computer learns the style of "The Starry Night." In the third step, the computer outputs its own "new painting." Image source: https://deepart.io/

In the human visual system, the path from the eye seeing an object to the brain forming an image passes through countless layers of neurons. The information captured by lower-level neurons is concrete; the higher the level, the more abstract the information.

The three Germans found that if they simulated this network on a computer and analyzed each layer, the lower layers captured the details of the image especially clearly, while the higher the layer, the less pixel-level detail was retained and the more contour information remained.

The "deep" in deep learning refers to the number of layers. Each layer of the neural network extracts image features, and the "artistic style" is a superposition of the features extracted at each layer.
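One way to make the "superposition of layers" idea concrete is the Gram-matrix style representation described in "A Neural Algorithm of Artistic Style": the style at one layer is summarized by the correlations between that layer's feature channels, and the per-layer differences are summed. The sketch below is only an illustration with NumPy. The function names, the normalization by the number of positions, and the equal layer weights are assumptions, and the feature arrays stand in for activations that a real CNN would produce.

```python
import numpy as np

def gram_matrix(features: np.ndarray) -> np.ndarray:
    """Summarize one layer's style as channel-to-channel correlations.

    features has shape (channels, height, width); the result has shape
    (channels, channels). Dividing by the number of positions is an
    assumed normalization, chosen here for readability.
    """
    c, h, w = features.shape
    flat = features.reshape(c, h * w)
    return flat @ flat.T / (h * w)

def style_loss(layers_a, layers_b, weights=None) -> float:
    """Weighted sum over layers of the mean squared Gram-matrix difference."""
    if weights is None:
        # Assumption: every layer contributes equally.
        weights = [1.0 / len(layers_a)] * len(layers_a)
    total = 0.0
    for fa, fb, wt in zip(layers_a, layers_b, weights):
        diff = gram_matrix(fa) - gram_matrix(fb)
        total += wt * float(np.mean(diff ** 2))
    return total

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Hypothetical activations for two layers of two different images.
    painting = [rng.standard_normal((8, 16, 16)), rng.standard_normal((16, 8, 8))]
    photo = [rng.standard_normal((8, 16, 16)), rng.standard_normal((16, 8, 8))]
    print("style distance:", style_loss(painting, photo))
```

Because the Gram matrix discards where in the image each feature occurs, it captures texture and brushwork rather than layout, which is why it can serve as a stand-in for "style."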

The three Germans wrote up their findings in two papers, "A Neural Algorithm of Artistic Style" and "Texture Synthesis Using Convolutional Neural Networks", which sparked a great deal of discussion in academic circles.

"At first, we just wanted to create something new in neuroscience. State-of-the-art artificial neural networks have many similarities to the human visual system. So later we thought we could do more interesting processing of pictures," Leon Gatys told DeeperBlue.

Shortly after publishing the papers, they founded a startup named Deep Art to put the ideas proposed in them into practice.

(The image creation interface on the Deep Art website. Deep Art offers a variety of artistic styles; once an image is finished, it is sent to the user's mailbox. Image source: deepart.io)

Users upload their photos on the Deep Art website and then create new paintings with the "Van Gogh robot" that Deep Art provides. The whole process takes a few hours while the computer performs the computation. Users can choose among different resolutions: 19 euros buys an image suitable for a postcard, and more than 100 euros buys a large-format, oil-painting-sized work.

What Leon Gatys built is not a Meitu Xiuxiu-style filter. Before Deep Art came out, there were already many Monet and Van Gogh filter apps, such as Mobile Monet and Van Gogh Camera, introduced in 2010, but their core principles are completely different from Deep Art's.

The interfaces of Mobile Monet and Van Gogh Camera. Both filter apps can render a user's photo with an artistic effect, but their core principles are completely different from the convolutional neural networks used by Deep Art. (Chart by DeeperBlue)

If we put the same image into Van Gogh Camera, the app computes every pixel according to a "formula" built in by the programmer and finally outputs a Van Gogh-style photo. But as soon as we want to change the style from Van Gogh to Picasso, the programmer must write a new set of code to change the "formula."
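To illustrate what such a hand-written "formula" looks like, here is a minimal sketch of a fixed per-pixel filter in NumPy. It is not the code of Van Gogh Camera or any other app; the `WARM_TONE` matrix (a common sepia-style color transform) and the function name are assumptions chosen for illustration.

```python
import numpy as np

# Assumed example "formula": a fixed sepia-style color matrix.
# Each output channel is a hard-coded mix of the input R, G, B values.
WARM_TONE = np.array([
    [0.393, 0.769, 0.189],
    [0.349, 0.686, 0.168],
    [0.272, 0.534, 0.131],
])

def fixed_filter(image: np.ndarray, matrix: np.ndarray = WARM_TONE) -> np.ndarray:
    """Apply the same color formula to every pixel of an (H, W, 3) uint8 image."""
    out = image.astype(np.float64) @ matrix.T
    return np.clip(out, 0, 255).astype(np.uint8)

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    photo = rng.integers(0, 256, size=(4, 4, 3), dtype=np.uint8)
    print(fixed_filter(photo)[0, 0])
```

Changing the look means editing `WARM_TONE` or the function itself by hand, which is exactly the limitation described above: the style lives in the programmer's code rather than being learned from a painting.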

In Deep Art, the "programmer" who writes the "formula" is a convolutional neural network (CNN). Given nothing more than Van Gogh's "The Starry Night" as input, the convolutional neural network automatically extracts the style features of the painting and quantifies them into a specific formula. In other words, any work in the history of art can serve as the source of a filter.

"Convolutional neural networks can be viewed as a machine artist," said Leon Gatys.

| From Germany to Russia

In early 2016, Russian computer engineer Alexei Moiseyenkov read the three Germans' papers. He keenly sensed that the Germans had not gone far enough: the technology was still absent from the consumer market.

He then set up a four-person team and developed Prisma, aiming to make it free, faster, and easier to use. "Two months to study the mathematical models, one and a half months to develop," Moiseyenkov said.

"Prisma commercialized this technology for the first time. They took full account of the rapid growth in smartphone coverage and meticulously studied user behavior. Prisma has entered a market on the order of 100 million users," The Moscow Times reported. "Whoever seizes the needs of users can become a billionaire."

Prisma turned out to be a rare highlight of the Russian Internet scene. The app was released on iOS in mid-June 2016, reached 7.5 million downloads in 15 days, and became a hit in 40 countries.

The huge success even caught the development team off guard; it had to work day and night to boost the servers' processing capacity.

"Looks like the whole of Russia was conquered by us," Moiseyenkov wrote on Facebook. By August 2, Prisma had more than 50 million users worldwide.

Russian Prime Minister Dmitry Medvedev, who has 23 million followers, also became a Prisma user. He posted a Prisma-processed picture on Instagram and quickly gained 87,000 likes.

Where Prisma improves on Deep Art is, first, that it greatly reduces image processing time. Before its user base reached the hundred-million scale, each photo took only 20 seconds to process in Prisma's system. Second, Prisma is a free mobile application, so compared with the web-based Deep Art it undoubtedly has a larger potential user base.

Within those 20 seconds, a user somewhere in the world uploads a photo, the photo is sent to a server in Moscow, Prisma processes it with artificial intelligence and neural networks, and the "stylized" result is returned to the user's phone.

This speed is among the best in the industry. Why is it so fast?

"They must have invested heavily," an engineer from a well-known domestic face recognition technology company told DeeperBlue. "Under the framework I was building at the time, using the computing power of an ordinary laptop, producing one such image took a few hours."

The German researcher Leon Gatys speculated to DeeperBlue: "I think they trained a feed-forward neural network to make the pictures."

"Prisma does not rely entirely on machine learning, but controls some key content," an insider told DeeperBlue. "For example, among the massive volume of user uploads there must be a considerable proportion of portraits. Compared with the original algorithm, Prisma seems to be better at handling facial details. Maybe they specifically added facial recognition and control."

According to Moiseyenkov himself, Prisma uses three neural networks in total, with a clear division of labor: two are responsible for style extraction and image generation, and one runs in the background to speed up the whole computation.

In contrast, Deep Art is more like a craftsman. Leon Gatys thinks his original algorithm is slower but better at rendering detail: "It's a real work of art." The paid, high-resolution images from deepart.io are comparable to works hanging on a museum wall.

The pricing page for works on the Deep Art website. Image source: deepart.io

"Their stylized work is a bit weaker than the original work. I think they are doing some lower-level image processing to cover up the lack of stylization, for example strengthening the edges," Leon Gatys told DeeperBlue. He believes that Prisma sacrifices artistic quality in pursuit of speed.

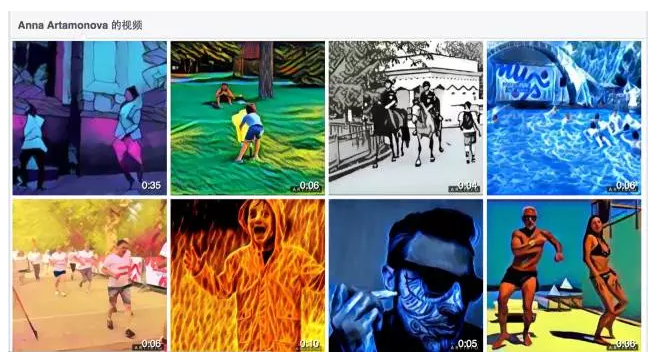

| A crowded field

Most people had speculated that Prisma would monetize by introducing more filters, but after Prisma's team visited Facebook, its next step turned out to be video. On July 20, 2016, Prisma founder Moiseyenkov uploaded a 29-second music video to his official Facebook account. Every frame of the video is rendered in an artistic style.

A video with Prisma artistic effects. Prisma has released several music videos on its official Facebook page.

However, Prisma is not the only one moving toward video.

Just nine days later, Anna Artamonova, an angel investor in Prisma and vice president of the Russian Internet giant Mail.Ru, announced on Facebook the release of Artisto, a direct competitor to Prisma. Artisto is a video processing app that combines neural networks and artificial intelligence to add dynamic art effects to video. Although clips cannot exceed 10 seconds, seeing the styles of famous paintings "come to life" is indeed delightful. Artamonova said the app took only 8 days to develop.

Vice President Artamonova posted a video produced with Artisto on Facebook. Image source: facebook.com

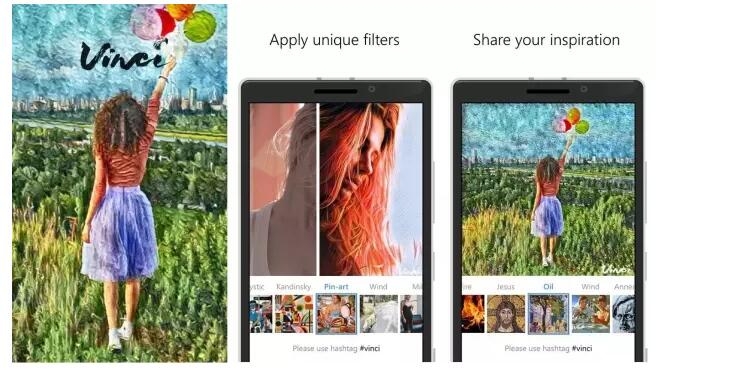

The day after Prisma launched on Android, VKontakte, Russia's largest social networking site, introduced a Prisma-like product of its own: Vinci. The two are very similar in function and appearance. Vinci not only cut image processing time to 2 seconds, but also quickly entered both the iOS and Android markets and covered Windows Phone, which Prisma had not reached, becoming the first neural network app on Windows Phone. It is worth mentioning that VKontakte is also owned by Mail.Ru.

As of August 2, 2016, on the free chart of the Russian App Store, Artisto ranked first, Vinci second, and Prisma had fallen to fifth.

The interface of the Vinci app. Image source: mspoweruser.com

The Russians are not the only ones thinking about video; Deep Art and the three Germans are also targeting the video market. Not long ago, the Deep Art website released a demo and began producing paid short videos. A 720p video of up to five minutes costs 249 euros.

Deep Art's products are high-priced and slow, targeting the mid-to-high-end market. On the mass consumer side, the free products Prisma, Vinci, and Artisto are all tied to the Russian Internet giant Mail.Ru. Rather than a technical contest among several products, this looks more like a strategic play by a powerful Internet capital player.

In fact, however, deep learning on video is still in its infancy, and it faces three major challenges:

First, video involves far more data than a single image, and the demand for computing power rises steeply;

Second, keeping information consistent across frames along the time axis, rather than processing each frame separately, remains an open problem;

Third, objects in a video are constantly moving, and researchers have not yet found a good way to track their changes in space.
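The first two challenges can be made concrete with a small sketch. The frame rate of 30 frames per second and the function names below are assumptions for illustration; the 20-second figure is the per-photo processing time quoted earlier for Prisma. The penalty function is a deliberately naive flicker measure that compares consecutive stylized frames directly and ignores motion, which is precisely the third challenge.

```python
import numpy as np

def frames_in_clip(seconds: float, fps: float = 30.0) -> int:
    """Number of frames to stylize for a clip (fps is an assumed value)."""
    return int(round(seconds * fps))

def naive_processing_time(seconds: float, per_frame_seconds: float = 20.0,
                          fps: float = 30.0) -> float:
    """Total time if every frame were stylized like an independent photo."""
    return frames_in_clip(seconds, fps) * per_frame_seconds

def temporal_penalty(frames: np.ndarray) -> float:
    """Mean squared change between consecutive frames of shape (T, H, W, C).

    A high value on a static scene indicates flicker. Real methods would
    first compensate for motion between frames; this sketch does not.
    """
    diffs = np.diff(frames.astype(np.float64), axis=0)
    return float(np.mean(diffs ** 2))

if __name__ == "__main__":
    print(frames_in_clip(10))          # frames in a 10-second clip
    print(naive_processing_time(10))   # seconds of compute, frame by frame
    rng = np.random.default_rng(0)
    steady = np.repeat(rng.random((1, 8, 8, 3)), 5, axis=0)
    flicker = rng.random((5, 8, 8, 3))
    print(temporal_penalty(steady), temporal_penalty(flicker))
```

Under these assumptions a 10-second clip contains 300 frames, so stylizing frame by frame at 20 seconds each would take 6,000 seconds, and frames stylized independently have no reason to agree with their neighbors.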

Beyond the "filter applications" surveyed above, deep learning has many other applications in image processing. In general, image applications of deep learning can be divided by process into two parts: input and output.

The input side can be regarded as "machine vision": the machine builds an internal understanding and recognition of the image, for example judging whether there is a person in the picture or classifying the figures in it.

The output side is about making further judgments and decisions and triggering actions. In autonomous driving, for example, the system analyzes the road information collected by the camera and gives the control system instructions such as accelerating or stopping.

Building on highly accurate image recognition, deep learning can accomplish more complex tasks. If Baidu's image search and Weibo's automatic detection of sensitive words in pictures are applications of the computer's rational cognition, then applications like Prisma use deep learning to let computers not only recognize images rationally but also perceive them, understanding the relationship between an image's style and its content.

This is the significance of artificial intelligence. The development of machine cognition determines whether the machine world can truly establish a self-consistent and complete knowledge system and ultimately replace, extend, and enhance human capabilities.

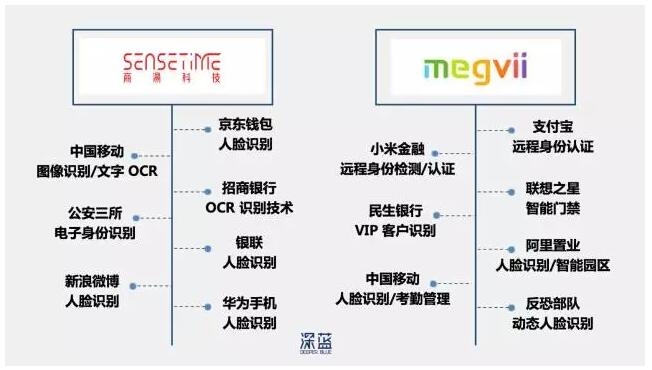

By domain, image applications of deep learning fall into several major categories: image recognition, classification, detection, search, feature extraction, and video processing. Among them, face recognition is where breakthroughs came fastest. As early as 2014, several startup technology teams had matched or exceeded the recognition accuracy of the human eye, as the following chart shows:

Companies ran their own tests on an open sample set and submitted the results. The results show that DeepID, a face recognition product developed by Professor Tang Xiaoou's team, has exceeded the recognition accuracy of the human eye. In the chart, the technology or product name appears above the short horizontal line and the team name below it. (Chart by DeeperBlue)

Among these companies, Facebook has integrated DeepFace into its own products: today, when users upload photos to their Facebook accounts, the system automatically tags everyone in the image. Deterrence Technology and SenseTime (Shangtang Technology), whose core technology comes from Prof. Tang Xiaoou, mainly provide mature identity authentication products to finance, security, and other sectors. Their customers include Alipay, China Merchants Bank, and anti-terrorism forces.

Comparison of the main customers of Shang Tang Technology and Trump Technologies. (Chart by DeeperBlue)

Among the three giants, Facebook is probably the most ambitious about deep learning for image applications. According to reliable internal sources, Facebook may open-source the code of its latest research results next weekend (August 2016). Put in the simplest terms, Facebook's new breakthrough is "using unsupervised learning to let a computer create its own pictures out of nothing."

An overview of the three major Internet companies' deep learning efforts. (Chart by DeeperBlue)

In the past, people relied on supervised learning for image tasks: large amounts of labeled data were needed to train an artificial neural network, which would gradually learn to recognize things. For example, if you show a computer 1,000 pictures of cats, the neural network gradually builds a model of a cat and can then identify cats in other images.

Today, however, Facebook uses unsupervised learning to let computers autonomously generate sample images of scenes, including airplanes, cars, and birds, that are realistic enough to be taken for real.

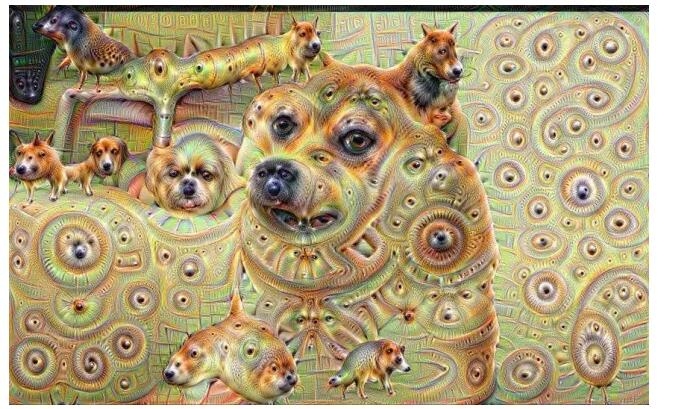

Google's Deep Dream is a computer program that can paint. It automatically recognizes images, picks out certain parts, and exaggerates them to create a psychedelic effect. Six months ago, Deep Dream held a successful exhibition in the Bay Area. It imitated the brushstrokes and technique of Hans Holbein, a German Renaissance painter of 500 years ago, and painted a series of Silicon Valley celebrities. Each painting was enough to make people pay thousands of dollars to collect it.

However, Deep Dream's algorithm can sometimes scare people. If it finds that the lines of your face look a bit like a dog, it will turn that area into a complete dog. "It's like taking LSD. The computer experiences hallucinations. So dogs are everywhere!" said one employee from the Google AI Lab.

Paintings by Google Deep Dream. The computer turned many areas of the picture into dogs' heads and swirls.

In any case, computers are showing us their own dreams.

References:

i. Gatys, Leon, Alexander S. Ecker, and Matthias Bethge. "Texture synthesis using convolutional neural networks." Advances in Neural Information Processing Systems. 2015.

ii. Gatys, Leon, Alexander S. Ecker, and Matthias Bethge. "A neural algorithm of artistic style." arXiv preprint arXiv:1508.06576 (2015).

iii. Wang Xiaogang. "Research progress and prospects of deep learning in image recognition". 2015.

iv. Venture Scanner. Artificial Intelligence Market Overview. 2016.

v. He, Kaiming, et al. "Deep residual learning for image recognition." arXiv preprint arXiv:1512.03385 (2015).

vi. Denton, Emily L., Soumith Chintala, and Rob Fergus. "Deep generative image models using a Laplacian pyramid of adversarial networks." Advances in Neural Information Processing Systems. 2015.

vii. Sun, Yi, et al. "Deep learning face representation by joint identification-verification." Advances in Neural Information Processing Systems. 2014.

viii. Sun, Yi, Xiaogang Wang, and Xiaoou Tang. "Deep learning face representation from predicting 10,000 classes." Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. 2014.

ix. Sun, Yi, et al. "DeepID3: Face recognition with very deep neural networks." arXiv preprint arXiv:1502.00873 (2015).

Lei Feng Network note: This article is published by Lei Feng Network with authorization from DeeperBlue. To reproduce it, please contact us for authorization, retain the source and the author, and do not delete any content.